I want to make it clear from the beginning that I am in fact an AI hater. But I also use it in absurd doses that might prove unhealthy. I tinker, I look for new ways to optimize my setup with the latest and greatest in terms of skills, subagents, hooks and token savers (have you tried the “keep everything in sqlite” strategy yet?). I’m also playing with open source LLMs on local hardware.

What is this all about?

There are some issues or problematic questions that I think are ignored or not emphasized enough about the usage of these tools. So, here we go.

Why learn?

This question was, to a certain extent, applicable to our recent past, when you could just google things instead of needing to know them by heart. However, this required some “tedious” research of finding the thing, processing the information and making sense of it. For anything more than trivial, it demanded at least a tiny bit of involvement. Because an exact answer to your specific situation was relatively unlikely, you had to put in the hard work to connect the dots. Sweat = learning.

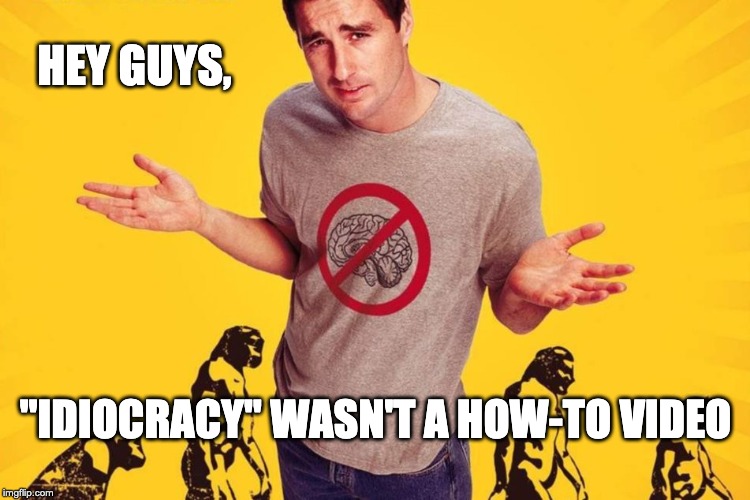

Current events must be a huge issue for education. We don’t have to do the research and processing any more and, most importantly, we no longer have to connect the dots. We don’t have to suffer or sweat for it. Almost everything is easily available, just one prompt away. Learning is a hobby at this point.

The Anxious Generation had a good analogy for what happened to my generation when smartphones and (anti)social media came along: it was like sending us all to Mars to see what happens. Now with AI, I can only wonder what kind of humans our successors will be. Will they be able to fix our mistakes and improve on what is, in order to give a smoother ride to all the souls during their extremely short, 4,000-week life on Earth? Or will they turn out to be even more anxious?

Why think?

As we offload more and more of the work to the machines, we eventually start to entrust them with small life decisions. Content with the machine’s answers, we start giving it more agency. It is only natural for humans to go for the path of least resistance. How much will it take to surrender cognition to the machine? Do we even want to find out?

Full cognition offload might or might not happen, but for now we have the “AI psychosis” thing going on, causing trouble. It is not really a replacement of cognition, but an alteration of it, to the point of getting into a “romantic” relationship with a chatbot or believing that you are, in fact, super special and smart. The AI was built to make you feel this way and not to challenge your assumptions and ideas. Social media algorithms were already creating echo chambers, feeding you content you will likely approve of; AI is merely the natural next step. It is a constant attack on critical thinking. How long are we going to tolerate it?

Privacy

Obviously, most people don’t really care about this. Everyone I talk about privacy with laughs it off with the classic “I’ve got nothing to hide!” My answer is always something along the lines of Snowden’s quote:

“Ultimately, arguing that you don’t care about the right to privacy because you have nothing to hide is no different than saying you don’t care about free speech because you have nothing to say.”

A good example of this is the OpenClaw delusion happening in China, in which tech companies help normies install these ultimately very dangerous tools. This is not just a privacy issue but also a security one. Having an AI agent with full permissions running on your laptop is inviting trouble. I hope they are not logged into their bank accounts in the browser. They probably have the credentials saved in it, so it doesn’t matter anyway. Fuck!

The current state of things is clearly a massive, large-scale data collection and surveillance operation. And this goes beyond the things we enjoy, the apps we use, the people we follow and the products we are likely to buy. This is direct, free access to our own minds. Moreover, the data is not simply stored somewhere encrypted like in the old days. All of it is certainly being used to train new models. Someone else’s personal issue might just be given as an example when I discuss my own internal turmoil with the LLM. Who knows?

Pressing on the matter, Anthropic is now asking users for ID verification, which is an interesting move, especially since their third-party ID verification app seems to have Peter Thiel (the guy behind the mass surveillance company, Palantir) as a major investor. Probably just a coincidence, guys, nothing to worry about!

The rich can’t grow tired of winning

One can ask oneself who benefits from this whole mess of a gold rush. And the answer is, of course, the pickaxe sellers. Surely, some lucky few will manage to have a few shiny coins happen to them. But the actual winners are the already rich. The way I see it, for the working class, there are only two angles to using AI:

- keeping their job for as long as they can in these crazy times, while giving away their mind and soul to the AI tech bros. One could argue they were already giving their minds and souls to their employers and that is indeed true for some. The difference is that before, it was making them (in many cases) better, smarter and more valuable, whereas now it is mere survival.

- trying an idea they had and didn’t have the time/confidence/strength to do before. It can work if they are very lucky, so this might be a good trade-off. These are the “lucky few” I mentioned above.

The rich are getting richer, as that’s how capitalism works, whilst the working class can afford less and less with every year that passes by. Meanwhile, companies with record high profits fire thousands of people like it is nothing. The cherry on top is that some employers don’t even provide you with the tools; they just expect you to be LLM-fast.

Closing thoughts

It feels strange to me how we keep using, and by doing so improving, the very tools that will enable a select few to take everything from us. This is definitely understandable if seen as an addiction. Working with an “agent” is basically a fancier way of playing slots: it has the dopamine rush, the spinning, the flashy colors and the near misses at the end that always demand “just one more” prompt.

What future are we so desperately accelerating towards? What kind of society are we building for ourselves and those to come? How far are we willing to go for Capital? And is this the only way forward?